How to Build Scalable Data Storage for Analytics

Building scalable data storage for analytics ensures your systems can handle growing data volumes, diverse sources, and complex queries without losing performance. For UAE businesses, this is critical due to rapid data growth from marketing campaigns, CRM systems, and seasonal spikes like Ramadan or the Dubai Shopping Festival. Scalable solutions allow seamless integration of new channels, support real-time dashboards, and manage costs effectively in AED.

Key Steps:

- Define Analytics Needs: Identify metrics like ROAS, seasonal trends, or lead sources.

- Choose the Right Storage: Options include data lakes for raw data or warehouses like Snowflake for structured reporting. A hybrid approach suits many GCC businesses.

- Leverage Cloud Platforms: Use tools like Google BigQuery or Azure Synapse for pay-as-you-go scalability.

- Set Up Data Pipelines: Use ETL/ELT methods and tools like Apache Kafka or AWS Glue to automate data flow.

- Monitor and Optimize: Partition data, cache frequent queries, and track costs in AED to manage performance and budgets.

By aligning your architecture with business goals, UAE companies can scale operations, maintain high performance during peak periods, and make data-driven decisions efficiently.

Matching Data Storage to Your Marketing Analytics Requirements

Defining Your Analytics Requirements

To make smart decisions, you first need to map out your marketing metrics. For UAE retail businesses, this often means real-time reporting on omnichannel interactions - everything from website clicks and mobile app usage to in-store purchases at the point of sale. Retailers also rely on historical data to track seasonal trends, such as those tied to Eid campaigns. Meanwhile, real estate companies have different needs. They combine CRM data with website analytics for historical reviews and require real-time updates to monitor market fluctuations, like the property boom that followed Expo 2020.

Retailers can gain an edge by tracking complete customer journeys. This includes integrating data from platforms like Google Analytics, social media, and CRM systems to create personalised offers that resonate with their audience. For real estate firms, pinpointing lead sources - whether from property portals, social ads, or email campaigns - is key. Analysing buyer journeys becomes even more critical in a market where shifts of 20–30% annually have been reported. Tools like Snowflake are particularly useful here, as they handle both structured transactions and semi-structured logs, making it easier to manage diverse data types.

Take Wick, for example. They manage over 1 million first-party data points for their clients by using Customer Data Platforms (CDPs). These platforms unify insights from behavioural tracking and journey mapping, turning fragmented information into a cohesive system. This approach not only supports predictive decision-making but also helps optimise data-driven strategies.

Once you’ve clearly defined your analytics metrics, the next step is ensuring your data storage solution can scale to meet these needs.

What Scalability Means for Marketing Data

Scalability means your storage and computing resources can grow as data volumes increase, without a redesign. Cloud warehouses like Google BigQuery, Amazon Redshift, and Azure Synapse take this challenge head-on. They use columnar storage and parallel processing to handle massive datasets - think petabyte-scale - and offer pay-for-use models that adjust capacity to demand. Where storage and processing are decoupled, each component can scale independently as your requirements evolve.

For handling sudden increases in data, elastic storage solutions like data lakes are invaluable. They can manage everything from unstructured logs and images to structured sales data. Additionally, serverless architectures take infrastructure management off your plate entirely. This means marketing teams can focus on analysing data rather than worrying about storage capacity or technical overhead.

Selecting the Right Storage Solution

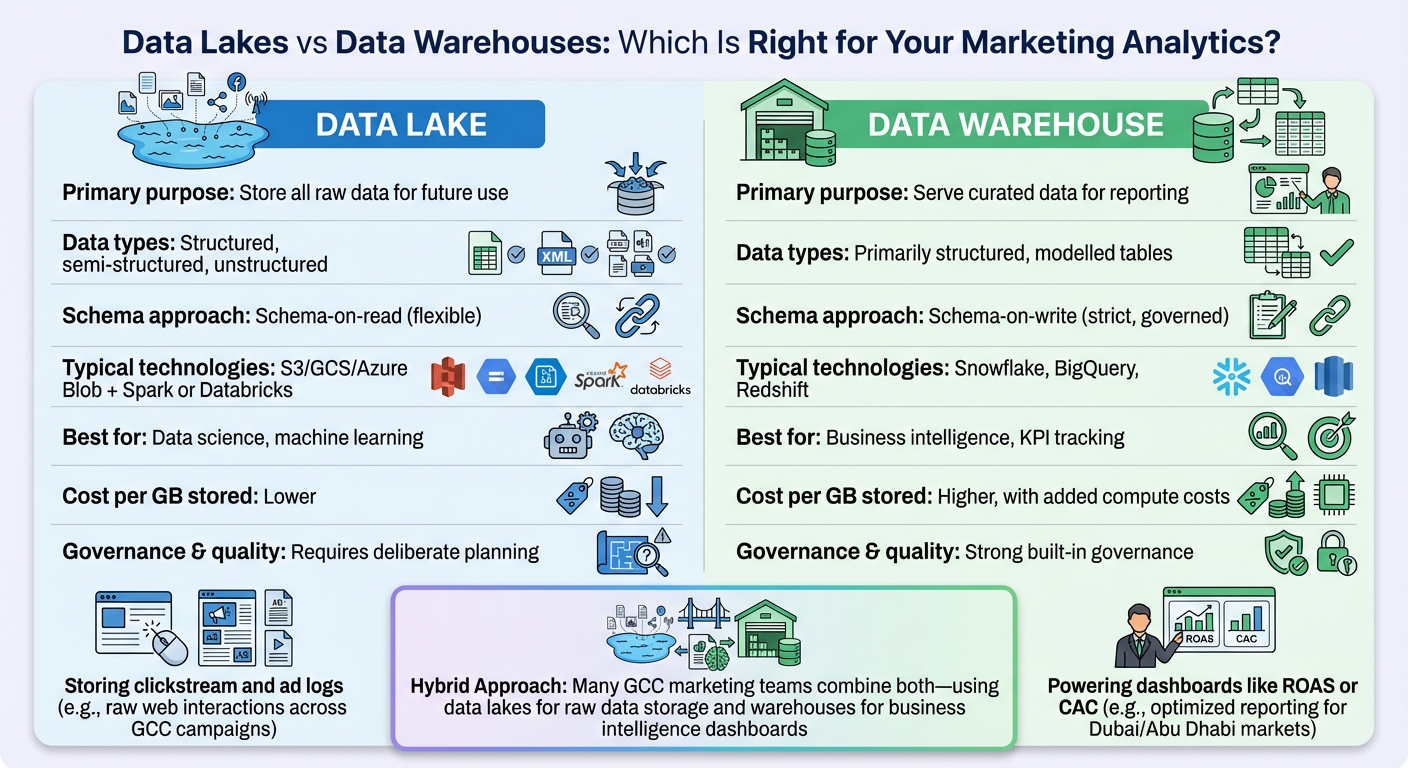

Data Lakes vs Data Warehouses: Key Differences for Marketing Analytics

Cloud Storage Platforms

When it comes to managing extensive marketing datasets, cloud storage platforms like Amazon S3, Google Cloud Storage, and Azure Blob Storage offer virtually unlimited capacity without upfront capacity planning. These services are ideal for handling high-volume data streams, such as clickstream data, ad impressions, and social media logs, while enabling multiple analytics tools - like BigQuery, Snowflake, or Databricks - to access the same data without duplication.

For marketing teams in the UAE and across the GCC, the decision often depends on the availability of regional data centres and how well the platform fits with existing tools. For instance, a retailer heavily reliant on Google Ads might lean towards Google Cloud Storage paired with BigQuery for its native integration. On the other hand, organisations standardised on Microsoft tools may prefer Azure Blob Storage combined with Synapse Analytics. It’s also crucial to consider extra costs like data egress fees, API charges, and cross-region traffic. To optimise storage, lifecycle policies can be used to move older data to lower-cost tiers while keeping recent data on high-performance storage. Additionally, adopting open columnar formats like Parquet and ORC can help reduce storage size and speed up queries - essential for analysing marketing campaign performance.

After selecting a cloud platform, the next step is to weigh the differences between data lakes and warehouses to find the best fit for your business needs.

Data Lakes vs Data Warehouses

A data lake is perfect for storing large amounts of raw, diverse data - such as unstructured social media posts, pixel logs, mobile app events, and exports from ad platforms - where the schema and use cases may evolve over time. Many GCC-based e-commerce brands start by ingesting raw events and third-party feeds into a cloud-based lake using open formats, later curating subsets for tracking specific metrics. However, without proper governance, a data lake can turn into a disorganised "data swamp."

In contrast, a data warehouse focuses on structured, curated datasets that are ready for business intelligence dashboards. This makes it ideal for tracking metrics like daily ad spend, impressions, conversions, and revenue by channel or market. For example, a UAE-based financial services company might store raw digital behavioural data in a lake for advanced analytics, while using a warehouse like Snowflake or BigQuery to provide leadership with cleaned, aggregated performance metrics.

| Aspect | Data Lake | Data Warehouse |
|---|---|---|
| Primary purpose | Store all raw data for future use | Serve curated data for reporting |
| Data types | Structured, semi-structured, unstructured | Primarily structured, modelled tables |
| Schema approach | Schema-on-read (flexible) | Schema-on-write (strict, governed) |
| Typical technologies | S3/GCS/Azure Blob + Spark or Databricks | Snowflake, BigQuery, Redshift |
| Best for | Data science, machine learning | Business intelligence, KPI tracking |
| Cost per GB stored | Lower | Higher, with added compute costs |
| Governance & quality | Requires deliberate planning | Strong built-in governance |
| Example use case | Storing clickstream and ad logs | Powering dashboards like ROAS or CAC |

For many advanced marketing teams in the GCC, a hybrid approach - combining a data lake and warehouse (or a lakehouse) - is becoming more common. This setup supports both data science experiments and business intelligence needs.

Storage Costs in the UAE

Once you’ve chosen the right storage solution, managing costs becomes a key focus.

In the UAE, estimating cloud storage costs involves more than just the base rates. Since most cloud contracts are priced in USD, businesses should account for currency fluctuations when planning budgets in AED. Selecting cloud regions closer to primary users can reduce latency, though pricing may differ compared to regions in Europe or the US.

To create a multi-year storage budget, start by analysing current daily and weekly data volumes from key sources like ad platforms, website and app analytics, CRM systems, and e-commerce platforms. For digital-first businesses in the GCC, data volumes often grow by 30–50% annually. It’s also worth modelling retention strategies - for example, keeping 12 months of data in high-performance storage while archiving older data in cold storage for three to five years.
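The growth range above can be turned into a rough multi-year projection. The sketch below is illustrative only: it assumes growth compounds once a year on total stored volume, and the 10 TB starting point is a hypothetical figure, not one taken from any real workload.

```python
def project_storage_tb(current_tb: float, annual_growth: float, years: int) -> list[float]:
    """Return projected total storage (TB) at the end of each year, compounding annually."""
    return [
        round(current_tb * (1 + annual_growth) ** year, 2)
        for year in range(1, years + 1)
    ]


# Hypothetical starting point: 10 TB today, projected three years out
# at the low (30%) and high (50%) ends of the growth range.
low_case = project_storage_tb(10, 0.30, 3)
high_case = project_storage_tb(10, 0.50, 3)
print(low_case)   # [13.0, 16.9, 21.97]
print(high_case)  # [15.0, 22.5, 33.75]
```

Planning against both ends of the range gives finance a band rather than a single number, which is usually easier to defend when actual volumes drift.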

Cost-saving measures include:

- Using columnar formats and partitioning data to ensure queries only scan relevant sections.

- Employing decoupled architectures to scale resources up during peak periods - like the Dubai Shopping Festival or major campaign launches - and scale down afterwards.

- Tiering older data to lower-cost storage options.

- Limiting interactive queries to the most recent 6–18 months to balance performance with cost efficiency.

Building Data Pipelines That Scale

Once you’ve set up scalable storage, the next big step is figuring out how to move data smoothly and efficiently from various marketing platforms into that storage. A well-built pipeline pulls raw data from sources like Google Ads, Meta Ads, Salesforce CRM, and website analytics, then prepares it for immediate reporting or long-term analysis. The trick is to design pipelines that can handle increasing data volumes without constant tinkering - something especially critical for GCC businesses dealing with seasonal spikes.

Setting Up ETL and ELT Processes

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) are two key methods for transferring marketing data into your analytics setup. With ETL, data goes through transformations - like converting ad spend to AED or standardising date formats to DD/MM/YYYY - before it’s stored. This approach works well when you need to anonymise sensitive information or enforce strict business rules before data lands in your storage, which is often the case for financial services in the UAE.
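As a minimal sketch of the transform step described above, the function below converts ad spend to AED and rewrites an ISO date as DD/MM/YYYY before the row is loaded. The field names (`campaign`, `date`, `spend_usd`) are hypothetical, and the exchange rate is an assumed constant that should be agreed with finance rather than hard-coded in practice.

```python
from datetime import datetime
from decimal import Decimal, ROUND_HALF_UP

# Assumed conversion rate for illustration; confirm the figure with finance.
USD_TO_AED = Decimal("3.6725")


def transform_row(row: dict) -> dict:
    """Convert spend to AED (2 decimal places) and reformat the date as DD/MM/YYYY."""
    spend_aed = (Decimal(row["spend_usd"]) * USD_TO_AED).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )
    day = datetime.strptime(row["date"], "%Y-%m-%d")
    return {
        "campaign": row["campaign"],
        "date": day.strftime("%d/%m/%Y"),
        "spend_aed": spend_aed,
    }


row = transform_row({"campaign": "eid_sale", "date": "2024-04-10", "spend_usd": "100.00"})
print(row["date"], row["spend_aed"])  # 10/04/2024 367.25
```

Using `Decimal` rather than floats keeps currency arithmetic exact, which matters once these figures feed financial reports.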

ELT, on the other hand, takes raw data straight from platforms and loads it into a data lake or warehouse. The heavy lifting of transformation happens later, using the cloud platform’s computing power. For marketing teams managing large amounts of unstructured data - like posts from Instagram or TikTok during a campaign - ELT is more flexible. Cloud warehouses like BigQuery, Snowflake, and Redshift allow you to scale computing resources up during peak periods, such as a product launch, and scale down when things quiet down.

For businesses in the GCC, the choice between ETL and ELT often depends on local data residency rules and how complex your data transformations are. If you’re operating in AWS Middle East (UAE) or Azure UAE North regions, ensure your transformation processes stay within the same region to reduce latency and comply with local data governance standards.

Data Ingestion and Integration Tools

Getting data from marketing platforms into your storage requires solid ingestion tools. Apache Kafka is a great choice for streaming high-speed data, such as live website clicks, ad impressions, or mobile app events, directly into your analytics system in real time. For instance, a Dubai-based e-commerce retailer could use Kafka to handle social media interactions and clickstream data during a busy sales event, ensuring no events are missed. This real-time capability is especially useful for personalisation engines that need to respond to customer behaviour instantly.

For those looking for a more visual approach, Apache NiFi provides a drag-and-drop interface to design data flows. It’s handy for routing data from point-of-sale systems, email platforms, and other sources without needing to write much code. It also includes ready-made processors for tasks like format conversion and data validation. Another option is AWS Glue, which offers serverless ETL capabilities. Glue can automatically catalogue and transform marketing data stored in S3, making it easy to integrate with other AWS tools. For example, UAE retailers can use Glue to combine Shopify sales data with Google Analytics, apply currency conversions to AED, and then load the result into Redshift or query it in place on S3 with Athena - all without worrying about managing infrastructure.

The choice of tool depends on your specific needs. Kafka is ideal for real-time streaming, NiFi is excellent for complex routing between different systems, and managed services like Glue or Google Cloud Dataflow are perfect when you want to focus on analytics instead of infrastructure. This approach naturally leads to a modular pipeline structure, where each function can scale independently.

Creating Modular Pipeline Components

Once you’ve nailed data ingestion and integration, the next step is designing modular pipelines. This approach breaks your pipeline into separate components - for example, one for ingesting Meta Ads data, another for processing revenue data, and a third for storing curated tables. The advantage? You can scale each component independently. If your social media data doubles during a UAE National Day campaign, you can increase ingestion capacity without disrupting other parts of the pipeline, like historical data storage or transformation processes.

To achieve this, you can define a separate service for each function. Use Kafka Connect connectors for data ingestion, tools like Apache Spark or Databricks for processing, and open table formats like Delta Lake or Apache Iceberg for storage. Packaging these components as containers and running them on Kubernetes allows them to scale automatically based on demand. By setting up APIs between components, you keep them loosely coupled, so you can upgrade or replace individual pieces without overhauling the entire system.

For marketing teams in the GCC, modular design also supports hybrid workflows. You might run daily batch jobs in BigQuery for regulatory reports while simultaneously streaming live e-commerce metrics through Kafka for real-time dashboards. This setup gives you the flexibility to balance cost, performance, and business needs without being tied to a single processing method.
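The modular idea can be shown in miniature with plain Python functions standing in for services. This is a sketch under stated assumptions: the stage names and the `spend` and `source` fields are hypothetical, and in production each stage would more likely be its own deployed component than a function in one process.

```python
from typing import Callable, Iterable, Iterator

Record = dict
Stage = Callable[[Iterable[Record]], Iterator[Record]]


def drop_missing_spend(records: Iterable[Record]) -> Iterator[Record]:
    """Validation stage: skip rows that have no spend value."""
    for record in records:
        if record.get("spend") is not None:
            yield record


def tag_source(source: str) -> Stage:
    """Build an enrichment stage that labels each row with its source platform."""
    def stage(records: Iterable[Record]) -> Iterator[Record]:
        for record in records:
            yield {**record, "source": source}
    return stage


def build_pipeline(*stages: Stage) -> Callable[[Iterable[Record]], list]:
    """Chain stages so each one consumes the previous stage's output."""
    def run(records: Iterable[Record]) -> list:
        for stage in stages:
            records = stage(records)
        return list(records)
    return run


# Hypothetical pipeline for one source; other sources get their own pipelines.
meta_ads_pipeline = build_pipeline(drop_missing_spend, tag_source("meta_ads"))
print(meta_ads_pipeline([{"spend": 5}, {"spend": None}]))
# [{'spend': 5, 'source': 'meta_ads'}]
```

Because each stage only depends on the shape of the records it receives, a stage can be swapped or scaled without touching the others, which is the property the modular design is after.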

Improving Storage Performance and Monitoring

Once your data pipelines are in place, the next step is to fine-tune your storage setup. This ensures faster queries, timely reports, and better cost management, especially during peak periods.

Methods to Improve Performance

One effective strategy is to partition your fact tables by date so queries only scan the relevant sections. For example, if your team in Dubai generates a weekly campaign performance report, the system will only need to access seven daily partitions. Similarly, clustering or sorting tables by commonly used filter columns - like campaign ID, channel, or country - further limits the amount of data scanned. Combining these techniques with columnar storage and compression reduces input/output (I/O), cuts storage costs, and speeds up query performance.
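To make the weekly-report example concrete, the sketch below lists the daily partitions such a report would touch. The `dt=YYYY-MM-DD` naming is an assumed convention for illustration; your warehouse or lake may label partitions differently.

```python
from datetime import date, timedelta


def partitions_for_report(end_day: date, days: int = 7) -> list[str]:
    """Return the daily partition keys, oldest first, for a report ending on end_day."""
    return [
        f"dt={(end_day - timedelta(days=offset)).isoformat()}"
        for offset in range(days - 1, -1, -1)
    ]


keys = partitions_for_report(date(2024, 3, 7))
print(len(keys), keys[0], keys[-1])  # 7 dt=2024-03-01 dt=2024-03-07
```

A query restricted to these seven keys never reads the rest of the table, which is where the I/O and cost savings come from.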

For dashboards that marketing leaders frequently rely on, caching plays a key role. Using cached query results or materialized views for complex calculations - such as daily spending, ROAS by channel, or funnel metrics - can cut response times substantially, in some cases from seconds to milliseconds. This not only improves the user experience but also reduces compute costs by avoiding repetitive, resource-heavy queries.
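Most warehouses provide result caching natively, so the following is only a sketch of the underlying idea: keep a computed result for a fixed time-to-live and recompute once it expires. The `TTLCache` class and the `roas_by_channel` key are hypothetical names, not part of any warehouse API.

```python
import time
from typing import Callable


class TTLCache:
    """Keep each computed result for ttl_seconds, then recompute on next access."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._store: dict = {}

    def get_or_compute(self, key: str, compute: Callable[[], object]) -> object:
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # still fresh: skip the expensive query
        value = compute()
        self._store[key] = (now, value)
        return value


cache = TTLCache(ttl_seconds=300)
# The lambda stands in for an expensive warehouse query.
roas = cache.get_or_compute("roas_by_channel", lambda: {"search": 4.2, "social": 3.1})
```

The time-to-live is the trade-off to tune: a longer value saves more compute but lets dashboards lag further behind the latest load.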

Another important aspect is workload management. Separating ELT batch jobs, ad-hoc analyses, and production dashboards into different compute clusters or queues ensures that heavy data transformations don’t delay critical reports. This way, key dashboards for leadership remain unaffected by intensive operations running in the background.

Once these performance improvements are in place, the focus shifts to monitoring them effectively to ensure long-term reliability.

Monitoring Tools and Methods

Optimising storage is just the beginning - ongoing monitoring is essential as your data scales. A strong monitoring framework should cover three key areas: platform health, data quality, and business service levels. On the infrastructure side, keep an eye on metrics like query latency (e.g., P95 and P99 percentiles), throughput, CPU and memory usage, and storage growth. These indicators help identify under-provisioned resources or bottlenecks caused by specific query patterns.
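For readers unfamiliar with P95 and P99, the sketch below computes them from a list of query latencies using the nearest-rank method. That method is one of several percentile definitions and is chosen here for simplicity; monitoring tools may interpolate and report slightly different values.

```python
def percentile(values: list, pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, clamped so the rank is at least 1.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds: 1, 2, ..., 100.
latencies_ms = list(range(1, 101))
print(percentile(latencies_ms, 95), percentile(latencies_ms, 99))  # 95 99
```

Tracking the tail rather than the average matters because a handful of slow queries can make a dashboard feel unreliable even when the mean looks healthy.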

Cloud providers like AWS, Google Cloud, and Azure offer built-in monitoring tools - such as AWS CloudWatch, Google Cloud Monitoring, and Azure Monitor - that track warehouse and storage metrics. These tools can also trigger alerts when thresholds are exceeded. For a more unified view across systems, you can integrate these with platforms like Datadog, Grafana, or Prometheus. These tools consolidate metrics from your warehouses, orchestration tools (like Airflow), and streaming systems into a single, easy-to-read dashboard.

Monitoring data quality is just as critical. Automated checks for anomalies - such as sudden drops in daily ad spend or missing impression data - can flag upstream issues before they impact reports. Keeping an eye on schema changes and ensuring referential integrity between campaign metadata and performance records further ensures reliable reporting.
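A simple version of the spend-drop check might look like the sketch below. The 50% threshold and the trailing-average baseline are assumptions for illustration; a real check would likely account for weekday patterns and planned campaign pauses.

```python
from statistics import mean


def spend_dropped(history: list, today: float, max_drop: float = 0.5) -> bool:
    """Flag today's spend if it fell more than max_drop below the trailing average."""
    if not history:
        return False  # nothing to compare against yet
    baseline = mean(history)
    return baseline > 0 and today < baseline * (1 - max_drop)


# Hypothetical daily spend in AED for the previous three days.
recent_spend = [1000, 1100, 900]
print(spend_dropped(recent_spend, 300))  # True
print(spend_dropped(recent_spend, 950))  # False
```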

For UAE organisations, data freshness is a key priority. If your pipeline is expected to load yesterday’s campaign data by 08:00 GST, your monitoring system should alert the team if there’s any risk of delay. To avoid overwhelming your team with alerts, route high-volume notifications to engineers while sending only critical updates - like “today’s performance dashboards are delayed” - to business stakeholders. This approach helps prevent alert fatigue, even during busy campaign periods.
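The 08:00 GST freshness rule can be expressed as a small check. This sketch assumes the pipeline records the most recent day it has fully loaded (`last_loaded_day`, a hypothetical input) and treats GST as a fixed UTC+4 offset.

```python
from datetime import date, datetime, time, timedelta, timezone
from typing import Optional

# Gulf Standard Time is UTC+4 with no daylight saving.
GST = timezone(timedelta(hours=4), "GST")


def is_load_late(now_utc: datetime, last_loaded_day: Optional[date],
                 deadline: time = time(8, 0)) -> bool:
    """True if the GST deadline has passed and yesterday's data has not landed."""
    now_gst = now_utc.astimezone(GST)
    yesterday = now_gst.date() - timedelta(days=1)
    past_deadline = now_gst.time() >= deadline
    return past_deadline and (last_loaded_day is None or last_loaded_day < yesterday)


# 04:30 UTC is 08:30 GST on 10 March, so data for 9 March should be present.
check_time = datetime(2024, 3, 10, 4, 30, tzinfo=timezone.utc)
print(is_load_late(check_time, date(2024, 3, 8)))  # True
print(is_load_late(check_time, date(2024, 3, 9)))  # False
```

A stricter variant could alert before the deadline when the upstream job is running behind, which matches the "risk of delay" wording above more closely.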

Managing Growth Within Budget

As your data needs grow, planning for capacity becomes essential. Whether you’re adding new advertising platforms, tracking more granular events, or entering new markets, it’s important to forecast storage needs based on current ingestion patterns. Elastic architectures that separate compute from storage allow you to temporarily scale resources during periods of heavy analysis.

To manage costs, consider implementing lifecycle policies for object storage. For example, move data older than 12 months to less expensive, infrequent-access tiers. Compressing historical partitions can also lower costs without affecting the performance of queries on recent data.

Since cloud bills are typically issued in USD, UAE organisations should collaborate with finance teams to convert expected costs into AED. Using an agreed-upon exchange rate with a buffer for fluctuations ensures better cost planning. Setting monthly AED cost caps for storage and compute, along with alerts when 70–80% of the budget is used, allows for timely adjustments before the billing cycle ends.
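The budgeting approach above reduces to a few lines of arithmetic. In this sketch the exchange rate, the 2% buffer, the 70% alert threshold, and the AED 50,000 cap are all assumed values for illustration; agree the real figures with your finance team.

```python
def budget_status(spend_usd: float, cap_aed: float, rate: float = 3.6725,
                  buffer: float = 0.02, alert_at: float = 0.70) -> str:
    """Convert month-to-date USD spend to AED with a buffer and compare it to the cap."""
    spend_aed = spend_usd * rate * (1 + buffer)
    used = spend_aed / cap_aed
    if used >= 1:
        return "over_cap"
    if used >= alert_at:
        return "alert"
    return "ok"


# Hypothetical monthly cap of AED 50,000.
print(budget_status(5_000, 50_000))   # ok
print(budget_status(10_000, 50_000))  # alert
print(budget_status(14_000, 50_000))  # over_cap
```

Running this check daily against the provider's billing export gives the team time to pause or resize workloads before the cycle closes.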

Regularly reviewing cost monitoring tools and billing reports can highlight areas for optimisation. Focus on the most expensive queries, users, and datasets to identify opportunities for improvement, such as rewriting inefficient reports or consolidating redundant tables. Partners like Wick can assist in setting cost guardrails - such as budget alerts, spending caps, and right-sized clusters - that align with your financial goals while accommodating busy marketing periods.

How Wick Builds Scalable Storage Solutions

Wick's Four Pillar Framework

Wick's Four Pillar Framework combines digital marketing expertise with a robust 'Capture & Store' pillar, designed to transform scattered data into scalable storage solutions. By utilising behavioural tracking and customer journey mapping, this approach integrates tools like CDP implementation, data analytics, audience segmentation, and performance tracking. With this setup, businesses can efficiently manage over 1 million first-party data points.

For companies in the UAE, this means implementing cloud-native platforms such as Snowflake or BigQuery. These solutions help marketing teams handle rapidly growing datasets from campaigns without sacrificing query speeds or exceeding budgets in AED. This directly addresses the challenges of managing increasing data volumes and ensuring high performance, which are critical for businesses in the region.

Tailored Solutions for UAE Companies

Wick's solutions are customised to address the specific needs of businesses in the GCC. This includes integrating security measures like encryption and role-based access control to support compliance with the UAE's Personal Data Protection Law (PDPL). For instance, a Dubai-based e-commerce company successfully scaled its operations during Black Friday by leveraging Apache Kafka, BigQuery, and Delta Lake. This setup enabled real-time ingestion and schema evolution, supporting a 10x growth in demand while maintaining query speeds under 5 seconds and keeping monthly costs below AED 50,000.

Wick also customises ETL/ELT pipelines using tools like AWS Glue, allowing businesses to seamlessly incorporate local payment data formatted in AED (with comma thousand separators) and Arabic-language campaign metrics. By adopting pay-as-you-go models, companies can ensure cost efficiency while maintaining the flexibility to adapt to changing needs.

Supporting Long-term Business Growth

Wick extends its scalable solutions to promote long-term growth by utilising API-first cloud solutions and lakehouse platforms such as Databricks. These systems allow businesses to scale from 1 TB to petabyte-sized datasets without experiencing downtime. This adaptability ensures marketing teams can integrate new data sources as companies expand across the GCC, enabling the use of AI models and predictive marketing strategies.

To maintain performance and data integrity, Wick incorporates observability tools for pipeline health monitoring, data quality alerts, and lineage tracking. Additionally, dashboards are localised with metric units for data volumes and DD/MM/YYYY date formats, and budget management is automated. This reliable foundation has helped companies like Forex UAE and Hanro Gulf achieve comprehensive analytics tracking and enhanced performance, paving the way for sustained digital growth in the UAE market.

Conclusion

Creating scalable data storage for marketing analytics isn’t about chasing the latest tools - it’s about ensuring your infrastructure aligns with your specific business objectives. Start by defining your key marketing metrics and setting clear performance service-level agreements (SLAs). These foundational requirements should guide your decision between options like data lakes, cloud warehouses, or hybrid solutions.

Once your priorities are clear, choose technology that directly supports those goals. Platforms such as BigQuery, Snowflake, or Redshift are strong choices for UAE businesses, offering the flexibility to scale as data grows from gigabytes to terabytes and beyond while managing costs in AED. Combine these systems with automated data pipelines and strong monitoring tools to handle peak loads without compromising performance.

For many UAE organisations, building such capabilities in-house can be time-consuming and resource-intensive. Collaborating with experienced partners who understand both modern data architecture and the unique needs of the region can speed up implementation and minimise risks. Wick’s tailored frameworks, for example, align infrastructure with measurable marketing outcomes, ensuring your investment delivers real value.

When designed correctly, your storage and data pipelines enable marketing teams to make faster decisions, improve return on ad spend (ROAS), lower customer acquisition costs, and scale operations efficiently. With the global big data and business analytics market projected to reach USD 745–800 billion by 2030, the opportunities are immense.

Kick off with a strategic roadmap and a collaborative workshop to align your data infrastructure with your growth ambitions. Evaluate your current stack against the principles outlined in this guide, map out a phased plan, and decide whether to build in-house or partner with specialists like Wick. This ensures your investment supports sustainable growth in the UAE market.

FAQs

What is the difference between data lakes and data warehouses in marketing analytics?

Data lakes are built to hold raw data in any form - structured, semi-structured, or unstructured - making them a great fit for managing massive and varied datasets like customer interactions, social media activity, or web logs. They offer the flexibility needed for exploring and analysing data in ways that can reveal new insights, particularly in marketing analytics.

On the other hand, data warehouses focus on storing processed, structured data that’s neatly organised and tailored for quick querying and reporting. This makes them ideal for creating consistent and dependable reports, such as campaign performance overviews or sales predictions. Depending on your specific marketing analytics goals, both systems can work together to provide a more comprehensive solution.

How can businesses in the UAE optimise cloud storage costs in AED?

To keep cloud storage expenses under control in the UAE, businesses can take a few practical steps:

- Select the right provider: Since most cloud contracts are priced in USD, compare providers on total cost converted to AED and on how well they match your specific business needs.

- Opt for pay-as-you-go plans: This helps you avoid paying for storage you don’t actually use.

- Compress your data: Reducing file sizes can significantly cut down storage demands and costs.

- Conduct regular audits: Go through your stored data periodically to remove outdated or unnecessary files.

- Embrace scalable storage options: Flexible storage solutions let you adjust capacity as your requirements change, ensuring you only pay for what you need.

On top of these strategies, negotiating with your provider for better terms can help you secure cost-effective storage solutions tailored to your business goals.

What are the key steps to create scalable data pipelines for marketing analytics?

To create scalable data pipelines for marketing analytics, the first step is to design a modular system that supports both growth and easy maintenance. Choose reliable data ingestion frameworks capable of managing large data volumes smoothly. Automating tasks like data cleaning and transformation is essential to keep data quality consistent and reduce manual effort.

Consider cloud storage options like data lakes or warehouses, which offer flexibility and cost savings. Real-time data processing can provide faster and more actionable insights. It's also critical to prioritise data security and compliance, especially in the UAE, to meet local regulatory standards. Lastly, integrate AI-driven tools to refine decision-making processes and continuously monitor your pipeline’s performance to boost efficiency and manage costs effectively.