
Wick

January 11, 2026

Top AI Ethics Challenges in GCC Marketing

Artificial intelligence is transforming marketing in the GCC, with 84% of organisations integrating AI by the end of 2025 compared to 62% in 2023. While AI enhances workflows through tools like predictive audience modelling and dynamic ad creative, it introduces ethical challenges tied to local values, data privacy, and regulatory compliance. Here's what you need to know:

- Key Issues: Algorithmic bias, overcollection of data, lack of transparency in AI decisions, and misalignment with local norms.

- Regulations: The UAE's 2024 AI Charter and Personal Data Protection Law (PDPL) set clear expectations for transparency, data protection, and respect for cultural sensitivities.

- Ethical Risks: Discriminatory targeting, misuse of personal data, and hyper-personalisation that manipulates consumer behaviour.

- Solutions: Strong governance, bias audits, and human oversight to ensure AI-driven marketing aligns with GCC laws and values.

AI in marketing offers opportunities but requires careful management to avoid ethical lapses. Businesses must prioritise compliance and trust to thrive in this evolving landscape.

AI in Omnichannel Campaign Workflows: Benefits and Risks

How GCC Marketers Use AI in Workflows

Marketers in the GCC region are increasingly leveraging AI to fine-tune their campaign processes and achieve greater precision in targeting. One standout application is dynamic creative routing, which automatically identifies and delivers the most suitable ad variations to specific audience segments. Another key tool is predictive audience scoring, which forecasts high-conversion prospects even before campaigns are launched.

AI is also reshaping content creation by generating both text and visuals, while programmatic ad buying uses real-time bidding algorithms to optimise media spend across multiple channels. By the end of 2025, 60% of GCC organisations had either adopted or were piloting agentic AI. Agentic AI goes beyond generating content: it coordinates tasks, evaluates outcomes, and adjusts strategies, which makes campaign workflows considerably more autonomous. These advancements, however, bring ethical challenges that demand immediate attention.

Ethical Risks of AI in Marketing

Greater automation in marketing workflows introduces serious ethical concerns. One of the most pressing issues is algorithmic bias, where AI systems trained on incomplete or biased datasets can lead to discriminatory practices, such as unfairly excluding certain consumer groups. Moreover, many AI systems operate as "black boxes", making it difficult for both marketers and consumers to understand the logic behind decisions like personalised pricing or targeting.

Data privacy risks are another significant challenge. These AI systems often require large amounts of personal data, which raises concerns about overcollection, unauthorised use, and data breaches. These risks can outpace existing regulatory safeguards. Hyper-personalisation adds another layer of complexity, because it can exploit psychological triggers to manipulate consumer behaviour. Finally, robust accountability mechanisms are often missing, so when ethical lapses occur in automated processes, it is often unclear who is responsible for addressing them.


Ethical Considerations Specific to the GCC

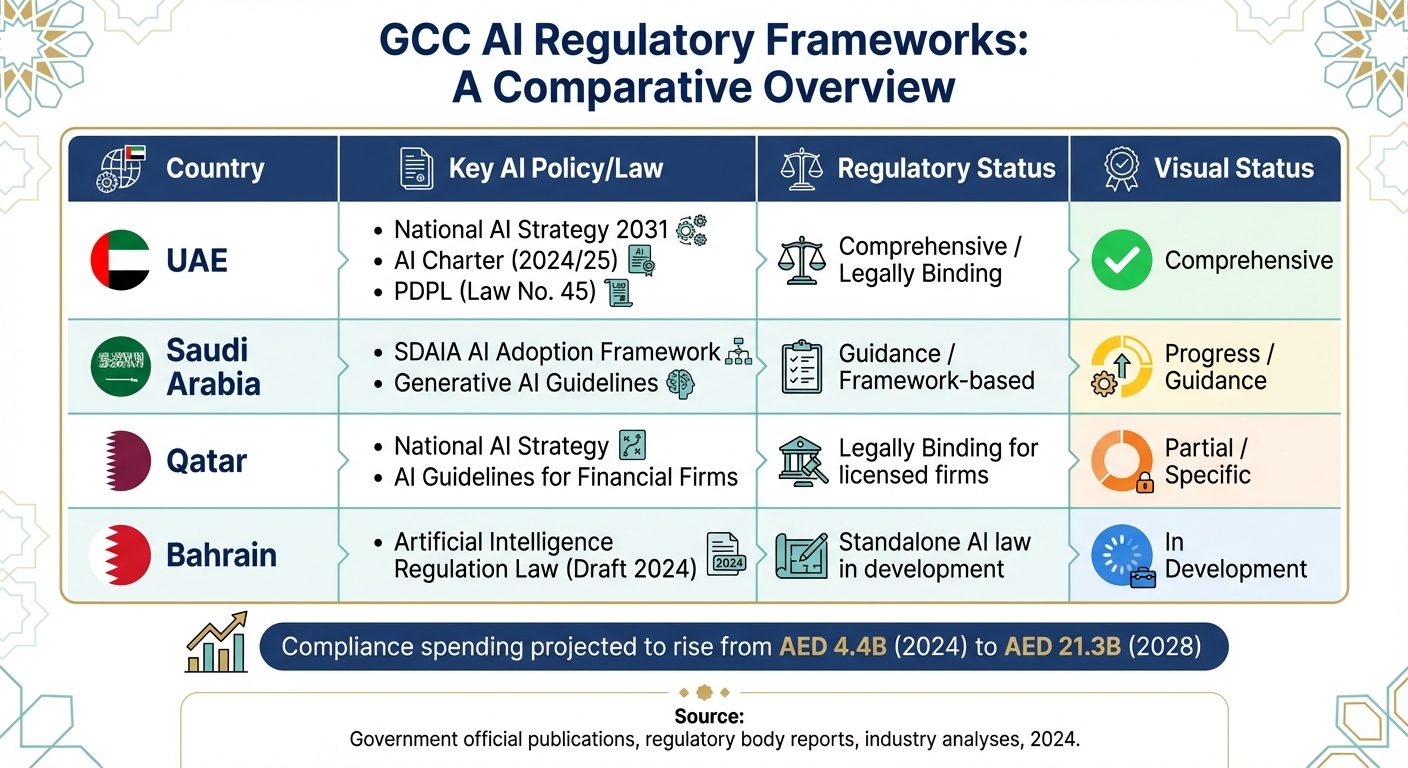

GCC AI Regulatory Frameworks Comparison by Country

Respecting Local Values and Religious Norms

In the GCC region, AI marketing strategies must go beyond technical efficiency to align with local cultural and religious values. The UAE Charter for the Development and Use of Artificial Intelligence underscores that innovation should serve the public good while safeguarding fundamental rights. This means AI systems must carefully balance automation with essential human oversight.

Sensitive data, such as religious beliefs and ethnic origin, is tightly governed under UAE Federal Decree-Law No. 45 of 2021. Organisations that profile such data should appoint a qualified Data Protection Officer to oversee compliance. The Charter also highlights the irreplaceable role of human judgment in AI processes:

"human judgment and human oversight over AI"

This principle is critical for aligning AI outputs with ethical standards and addressing potential biases in automated systems.

Ethical AI frameworks in the GCC also prioritise diversity and non-discrimination. To meet these standards, marketers must ensure that audience segmentation does not exclude or disadvantage groups on discriminatory grounds.

Regulatory Frameworks and Compliance

Beyond cultural considerations, the GCC has established robust regulatory frameworks to guide ethical AI use. The UAE leads the way with the National AI Strategy 2031, the AI Charter (introduced on 10 June 2024), and the legally binding Personal Data Protection Law (Federal Decree-Law No. 45 of 2021), which took effect on 2 January 2022. Saudi Arabia, through SDAIA's AI Adoption Framework, and Qatar, with its legally binding AI Guidelines for financial firms, have also developed frameworks to regulate AI practices.

| Country | Key AI Policy/Law | Regulatory Status |
|---|---|---|
| UAE | National AI Strategy 2031; AI Charter (2024/25); PDPL (Law No. 45) | Comprehensive / Legally Binding |
| Saudi Arabia | SDAIA AI Adoption Framework; Generative AI Guidelines | Guidance / Framework-based |
| Qatar | National AI Strategy; AI Guidelines for Financial Firms | Legally Binding for licensed firms |
| Bahrain | Artificial Intelligence Regulation Law (Draft 2024) | Standalone AI law in development |

In April 2025, the UAE also announced a regulatory intelligence ecosystem. It uses machine learning to link laws to their practical application, with the aim of cutting legislative drafting time by up to 70%. Businesses operating in free zones such as DIFC and ADGM must also follow those zones' own data protection rules. ADGM can impose administrative fines of up to US$28 million for non-compliance, and DIFC levies a US$50,000 fine for failing to appoint a Data Protection Officer.

UAE consumers also have the right to object to automated decision-making, and every AI-driven marketing workflow must build in a way to honour that right. Together, these rules set the compliance baseline against which the ethical challenges of AI marketing across the GCC should be assessed.
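A marketing workflow can honour that right by placing a gate in front of the model. The sketch below is a minimal illustration, not a compliance recipe. It assumes a hypothetical `objects_to_automated_decisions` flag captured from a preference centre. When the flag is set, the code falls back to a non-personalised default offer (`DEFAULT_OFFER`, also hypothetical). Routing the case to a human reviewer would be an equally valid fallback.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Customer:
    customer_id: str
    # Hypothetical flag, set from a preference centre when the customer objects
    objects_to_automated_decisions: bool = False


# Illustrative non-personalised fallback for customers who have objected
DEFAULT_OFFER = "standard_offer"


def choose_offer(customer: Customer, model: Callable[[Customer], str]) -> str:
    """Return an offer, bypassing the AI model for customers who have objected."""
    if customer.objects_to_automated_decisions:
        # Skip scoring and profiling entirely; serve the non-automated default
        return DEFAULT_OFFER
    return model(customer)


def toy_model(customer: Customer) -> str:
    # Stand-in for a real propensity model (hypothetical)
    return "premium_upsell"


print(choose_offer(Customer("c1"), toy_model))        # premium_upsell
print(choose_offer(Customer("c2", True), toy_model))  # standard_offer
```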


Top Ethical Challenges in GCC AI Marketing

Biased Targeting and Discriminatory Segmentation

One major ethical concern in AI-driven marketing across the GCC is algorithmic bias. When AI models rely on incomplete or skewed datasets, they risk excluding certain demographics or creating discriminatory audience segments, which is particularly problematic in the region's diverse population. The UAE Charter for AI highlights this issue, stressing the importance of inclusivity and respect for individual differences.

The problem is further complicated by the lack of transparency in AI models. This opacity prevents marketing teams from fully understanding how targeting algorithms operate, making it challenging to detect and address bias. For companies operating in free zones like the DIFC, this becomes even more critical. Article 10 of the DIFC Data Protection Regulations requires transparency in automated decision-making and profiling. Ignoring these issues could also lead to violations of the UAE's Consumer Protection Law (Federal Law No. 15 of 2020).

Overcollection and Misuse of Personal Data

The pursuit of hyper-personalisation has driven extensive collection of consumer data in the GCC. As AI tools process growing volumes of personal information, privacy concerns have escalated. These practices risk breaching regional data protection laws such as the UAE Personal Data Protection Law (Law No. 45) and Saudi Arabia's PDPL. Balancing the data needed for AI training against these legal limits is a critical challenge for organisations.
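Purpose-based data minimisation is a common way to limit overcollection. Each processing purpose declares the fields it needs, and the system drops everything else before data reaches an AI tool. The sketch below assumes a hypothetical `ALLOWED_FIELDS` allow-list and hypothetical field names. It illustrates the pattern only and does not encode the requirements of any specific law.

```python
# Hypothetical purpose-to-fields allow-list: each processing purpose declares
# the minimum set of fields it needs
ALLOWED_FIELDS: dict[str, set[str]] = {
    "campaign_reporting": {"customer_id", "channel", "converted"},
    "email_personalisation": {"customer_id", "first_name", "preferred_language"},
}


def minimise(record: dict, purpose: str) -> dict:
    """Return a copy of the record containing only the fields the purpose allows."""
    if purpose not in ALLOWED_FIELDS:
        # Refuse processing for purposes nobody has declared and reviewed
        raise ValueError(f"Undeclared processing purpose: {purpose!r}")
    allowed = ALLOWED_FIELDS[purpose]
    return {key: value for key, value in record.items() if key in allowed}


raw = {
    "customer_id": "c42",
    "first_name": "Layla",
    "preferred_language": "ar",
    "religion": "<sensitive>",
    "nationality": "<sensitive>",
    "channel": "email",
}
print(minimise(raw, "email_personalisation"))
# {'customer_id': 'c42', 'first_name': 'Layla', 'preferred_language': 'ar'}
```

Sensitive fields such as `religion` never leave the function unless a declared purpose explicitly lists them. That makes any such decision visible and reviewable.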

Lack of Transparency in AI Decisions

The lack of clarity in how AI systems make decisions is another significant issue. Whether it’s ad targeting, content personalisation, or budget allocation, the decision-making process often remains a mystery, which can erode trust. Without transparency, it’s difficult to ensure that AI-driven outcomes meet ethical standards. A 2025 study examining 85 documents, including legal and academic texts, found that while transparency is essential for building trust, most AI systems are too complex to offer clear explanations.

For marketers in the GCC, this challenge is amplified by regulatory expectations. The UAE Charter underscores the importance of human oversight in AI systems to address errors or biases. This human involvement is essential to bridge the gap between technical complexity and ethical accountability.
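Human oversight is only practical when each automated decision leaves a trace. The minimal sketch below writes every decision as one JSON line recording what was served, by which model version, with what confidence, and why. It assumes a hypothetical `DecisionRecord` schema, and an in-memory buffer stands in for an append-only audit store.

```python
import io
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    customer_id: str
    decision: str        # e.g. the creative variant or offer that was served
    model_version: str
    confidence: float    # the model's score for the chosen option
    reasons: list[str]   # human-readable steps in the decision path
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_decision(record: DecisionRecord, sink: io.TextIOBase) -> None:
    """Append one decision as a JSON line so reviewers can trace it later."""
    sink.write(json.dumps(asdict(record), ensure_ascii=False) + "\n")


buf = io.StringIO()  # stands in for an append-only audit store
log_decision(
    DecisionRecord(
        customer_id="c42",
        decision="creative_ar_family_v2",
        model_version="ranker-2025-11",
        confidence=0.82,
        reasons=["language=ar", "segment=returning_customer"],
    ),
    buf,
)
print(buf.getvalue())
```

All identifiers and values here are illustrative. The point is that a reviewer can answer "why did this customer see this ad?" from the log alone.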

Consent and User Preferences

Consumer rights are another area of concern, particularly when it comes to obtaining clear and informed consent. As AI-driven campaigns span multiple platforms, ensuring that user preferences are respected across all channels is vital. Robust consent mechanisms aligned with regional data protection laws are necessary to maintain transparency and uphold consumer trust.
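Here is a minimal sketch of per-channel consent. It assumes a hypothetical `ConsentRecord` structure kept in one shared store, so every channel reads the same preferences. Note the default: a channel with no recorded choice is treated as not consented.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ConsentRecord:
    customer_id: str
    # channel name -> (consent granted?, UTC time of the customer's latest choice)
    channels: dict[str, tuple[bool, datetime]] = field(default_factory=dict)

    def update(self, channel: str, granted: bool) -> None:
        """Record the customer's latest choice for one channel."""
        self.channels[channel] = (granted, datetime.now(timezone.utc))

    def allows(self, channel: str) -> bool:
        """No recorded choice means no consent (customers must opt in)."""
        entry = self.channels.get(channel)
        return entry is not None and entry[0]


def eligible_channels(consent: ConsentRecord, planned: list[str]) -> list[str]:
    """Filter a campaign's planned channels down to those the customer agreed to."""
    return [channel for channel in planned if consent.allows(channel)]


consent = ConsentRecord("c7")
consent.update("email", True)
consent.update("sms", False)
print(eligible_channels(consent, ["email", "sms", "whatsapp"]))  # ['email']
```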

Misalignment with Local Values and Religious Practices

Ensuring that AI-generated content aligns with local values and religious practices is a challenge specific to the GCC. The UAE Charter for AI calls for AI systems to respect cultural and religious norms, a principle that matters most during significant periods such as Ramadan and Eid. It emphasises "Human Commitment", advocating human oversight so that AI outputs respect individual dignity and rights. Without this oversight, AI systems trained on global datasets may produce content that is technically accurate but culturally inappropriate.

This issue is made more complex by a lack of preparedness among organisations. A recent survey found that 75% of GCC executives admit their companies lack effective AI change management programmes. Addressing these ethical challenges requires a strong framework for oversight and a commitment to aligning AI processes with regional values.

Implementing Ethical AI in GCC Marketing

Governance and Risk Management

Effective governance is the backbone of ethical AI practices. For marketers across the GCC, this means appointing dedicated AI ethics officers or setting up cross-functional ethics committees to oversee how AI tools are implemented and scaled.

Bias audits are a key step in this process. By applying fairness constraints and using diverse datasets, marketers can identify and address discriminatory patterns in audience segmentation. Tools that log decision paths and confidence scores make AI decisions clear and auditable. One useful practice is creating "bias heatmaps", which highlight where algorithms might unintentionally exclude certain groups or produce unfair results. Regulators are moving in the same direction. In November 2025, the Saudi Data & AI Authority (SDAIA) mandated ethical impact assessments for all government AI systems, including applications such as healthcare diagnostics and judicial support, to ensure compliance with the country's responsible AI standards.

Similarly, the Dubai Digital Authority (DDA) has been at the forefront of AI governance. Starting in 2025, the DDA required explainability for high-risk AI systems in sectors like banking and transportation, cementing the UAE’s reputation as a global leader in AI oversight. The financial commitment to these governance measures is significant - spending in the GCC on AI compliance and safety is projected to rise from AED 4.4 billion in 2024 to AED 21.3 billion by 2028.

"Ethical AI is not optional - it is the foundation of the GCC's AI strategy." - Softdroom AI

These governance initiatives not only set a strong ethical foundation but also directly tackle risks like bias, lack of transparency, and non-compliance.
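A bias audit can start from something as simple as comparing selection rates across audience segments, in the spirit of the bias heatmaps described above. This sketch computes each group's selection rate, divides it by the highest group's rate, and flags groups that fall below a threshold. The 0.8 default borrows the "four-fifths" heuristic from US employment-selection guidance and is an assumption here, not a GCC standard. The group labels and data are hypothetical. Auditing by attributes such as religion or ethnic origin itself involves sensitive data and needs a lawful basis.

```python
from collections import Counter


def selection_rates(audience: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of each group that the targeting model selected for a campaign."""
    totals = Counter()
    selected = Counter()
    for group, was_selected in audience:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def bias_report(
    audience: list[tuple[str, bool]], threshold: float = 0.8
) -> dict[str, tuple[float, bool]]:
    """Return {group: (ratio to the most-selected group, flagged?)}.

    The 0.8 default follows the "four-fifths" heuristic (an assumption).
    """
    rates = selection_rates(audience)
    highest = max(rates.values())
    if highest == 0:
        # Nobody was selected, so there is no disparity to measure
        return {group: (1.0, False) for group in rates}
    return {
        group: (round(rate / highest, 2), rate / highest < threshold)
        for group, rate in rates.items()
    }


# Hypothetical audit sample: (segment label, selected by the model?)
audience = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
print(bias_report(audience))
# {'group_a': (1.0, False), 'group_b': (0.5, True)}
```

Running the same report per campaign, per channel, or per region turns it into the rows of a simple heatmap.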

Building Ethical AI Workflows with Wick

Once governance structures are in place, the next step is embedding ethics into everyday operations. Wick’s Four Pillar Framework offers a comprehensive approach to integrating ethical safeguards into marketing workflows.

- Build & Fill: This pillar ensures that content creation and website development align with local values right from the start.

- Plan & Promote: Here, bias-free targeting is prioritised in SEO strategies and paid advertising campaigns.

- Capture & Store: Data minimisation and adherence to UAE and Saudi data protection laws take centre stage through responsible analytics and customer journey mapping.

- Tailor & Automate: AI-driven personalisation is used with transparent and auditable decision paths, ensuring that automated processes remain traceable and culturally considerate.

By following this framework, GCC marketers can maintain human oversight while scaling AI capabilities. Governance checkpoints integrated across all four pillars allow organisations to rigorously test high-risk campaigns before launch, ensuring alignment with regional norms. Additionally, this approach supports the transition to explainable AI systems, moving away from opaque "black box" models to workflows where every decision path is fully traceable.

This structured methodology not only enhances operational transparency but also ensures that AI-powered marketing efforts remain ethical and aligned with local expectations.
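Pre-launch governance checkpoints like those described above can be expressed as a simple rule set. This sketch rests on stated assumptions. The `Campaign` fields, the review names, and the team-maintained list of culturally sensitive periods are all hypothetical. The example dates are placeholders, not actual Ramadan or Eid dates. A campaign can launch only when every review it triggers has been approved.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Campaign:
    name: str
    start: date
    end: date
    uses_sensitive_data: bool = False   # e.g. religion or ethnic origin in targeting
    ai_generated_content: bool = False
    approvals: set[str] = field(default_factory=set)


def required_reviews(
    campaign: Campaign, sensitive_periods: list[tuple[date, date]]
) -> set[str]:
    """Work out which sign-offs a campaign needs before launch."""
    reviews = set()
    if campaign.uses_sensitive_data:
        reviews.add("dpo_review")
    if campaign.ai_generated_content:
        reviews.add("human_content_review")
    # Two date ranges overlap when each starts on or before the other ends
    if any(campaign.start <= p_end and p_start <= campaign.end
           for p_start, p_end in sensitive_periods):
        reviews.add("cultural_review")
    return reviews


def can_launch(campaign: Campaign, sensitive_periods: list[tuple[date, date]]) -> bool:
    """Launch only when every required review has been approved."""
    return required_reviews(campaign, sensitive_periods) <= campaign.approvals


# Placeholder window: substitute the officially announced dates for each year
periods = [(date(2026, 3, 1), date(2026, 3, 31))]
promo = Campaign("spring_promo", date(2026, 3, 10), date(2026, 3, 20),
                 ai_generated_content=True)
print(sorted(required_reviews(promo, periods)))
# ['cultural_review', 'human_content_review']
print(can_launch(promo, periods))  # False
promo.approvals |= {"cultural_review", "human_content_review"}
print(can_launch(promo, periods))  # True
```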

Conclusion

Recent findings reveal a notable gap between the adoption of AI and its effective scaling across the GCC region. To bridge this divide, marketers in the GCC must rethink how they design and scale AI-driven campaigns, particularly when addressing ethical challenges. These include mitigating algorithmic bias, protecting data privacy, and ensuring decision-making processes align with local cultural norms.

Building trust is a cornerstone of success in this space. As Robert Ptaszynski, Partner and Head of Digital and Innovation at KPMG Middle East, explains:

"By building AI systems that are transparent, inclusive, and human-centric, businesses can unlock new opportunities, gain stakeholder trust, and differentiate themselves in an increasingly AI-driven economy".

This trust is especially critical in a region where AI could contribute up to $150 billion in economic value, accounting for approximately 9% of the GCC's total GDP.

The regulatory environment is also evolving rapidly. Bahrain is drafting standalone AI regulations, and Qatar has introduced legally binding AI guidelines for financial institutions. These developments highlight the importance of embedding ethical practices into all aspects of AI marketing. Organisations that prioritise ethical principles now will be better equipped to adapt to these regulatory changes while avoiding costly compliance issues.

In the UAE, guidelines emphasise the need for human oversight to address errors and biases. This is particularly vital for marketing campaigns, which must balance respecting local values and religious traditions with delivering personalised, scalable experiences.

FAQs

What are the key ethical challenges of using AI in marketing within the GCC region?

The integration of AI into marketing strategies across the GCC comes with a set of ethical hurdles that businesses need to navigate carefully, particularly to align with local values and regulations. One critical issue is bias in AI algorithms. When algorithms unintentionally favour or exclude certain groups, it can lead to unfair practices, damaging trust and clashing with ethical marketing standards.

Another pressing concern is the lack of transparency in how AI systems make decisions. This opacity can leave both consumers and regulators in the dark about how outcomes - like targeted ads or personalised recommendations - are generated, raising questions about accountability.

Privacy also takes centre stage, especially when personal data is collected and analysed for customised advertising. Companies operating in the UAE must ensure strict adherence to local data protection laws to avoid legal pitfalls. On top of that, security risks tied to AI systems, such as vulnerabilities to cyberattacks, could jeopardise sensitive information, affecting both businesses and their customers.

Lastly, there’s the ethical dilemma of manipulating consumer behaviour. Overly personalised or misleading content can exploit consumer trust, potentially leading to scepticism towards brands and their intentions.

By tackling these challenges head-on and committing to ethical practices, businesses in the GCC can use AI responsibly, ensuring both consumer trust and long-term growth in their marketing initiatives.

What are the UAE's regulations on AI transparency and data privacy?

The UAE has rolled out detailed regulations to ensure AI systems operate transparently while safeguarding data privacy. The AI Ethics Principles & Guidelines, developed by the Ministry of State for Artificial Intelligence, set clear expectations for organisations. These include disclosing when AI is being used, explaining the logic behind automated decisions, and keeping records of algorithmic outcomes. The guidelines also stress the importance of privacy-preserving AI, requiring practices like minimising data usage, obtaining consent for data processing, and implementing robust protections for personal information.

The UAE Personal Data Protection Law (Federal Decree-Law No. 45 of 2021) adds stringent rules for handling personal data. It generally requires the data subject's consent for processing, subject to specific legal exceptions. It also gives individuals the right to request corrections or stop data processing, and it sets strict conditions for transferring data internationally. For businesses using AI in marketing, aligning with these regulations is essential, not only for compliance but also to earn and maintain user trust.

How can marketers ensure AI aligns with cultural values in the GCC region?

To ensure AI aligns with the cultural values of the GCC region, marketers should adopt approaches that honour local ethics and traditions.

- Adhere to UAE AI ethics guidelines: Following principles such as transparency, fairness, and accountability helps ensure AI systems are both inclusive and culturally aware. Using self-assessment tools can identify risks and prevent potential issues before campaigns are rolled out.

- Customise content for local audiences: Tailor messaging in Arabic, incorporating the Emirati dialect where suitable, and ensure visuals and symbols respect Islamic traditions and the UAE’s national identity. Collaborating with local cultural experts can refine campaigns and avoid cultural missteps.

- Respect privacy and data protection: Building trust requires safeguarding user privacy and implementing strong data protection measures, aligning with the ethical standards upheld in the UAE.

By embedding these strategies into their efforts, marketers can balance technological advancement with cultural respect, creating stronger connections and trust within the region.